X-AI

Explains why soil models predict the patterns they do by highlighting key drivers and local effects, improving trust and scientific insight.

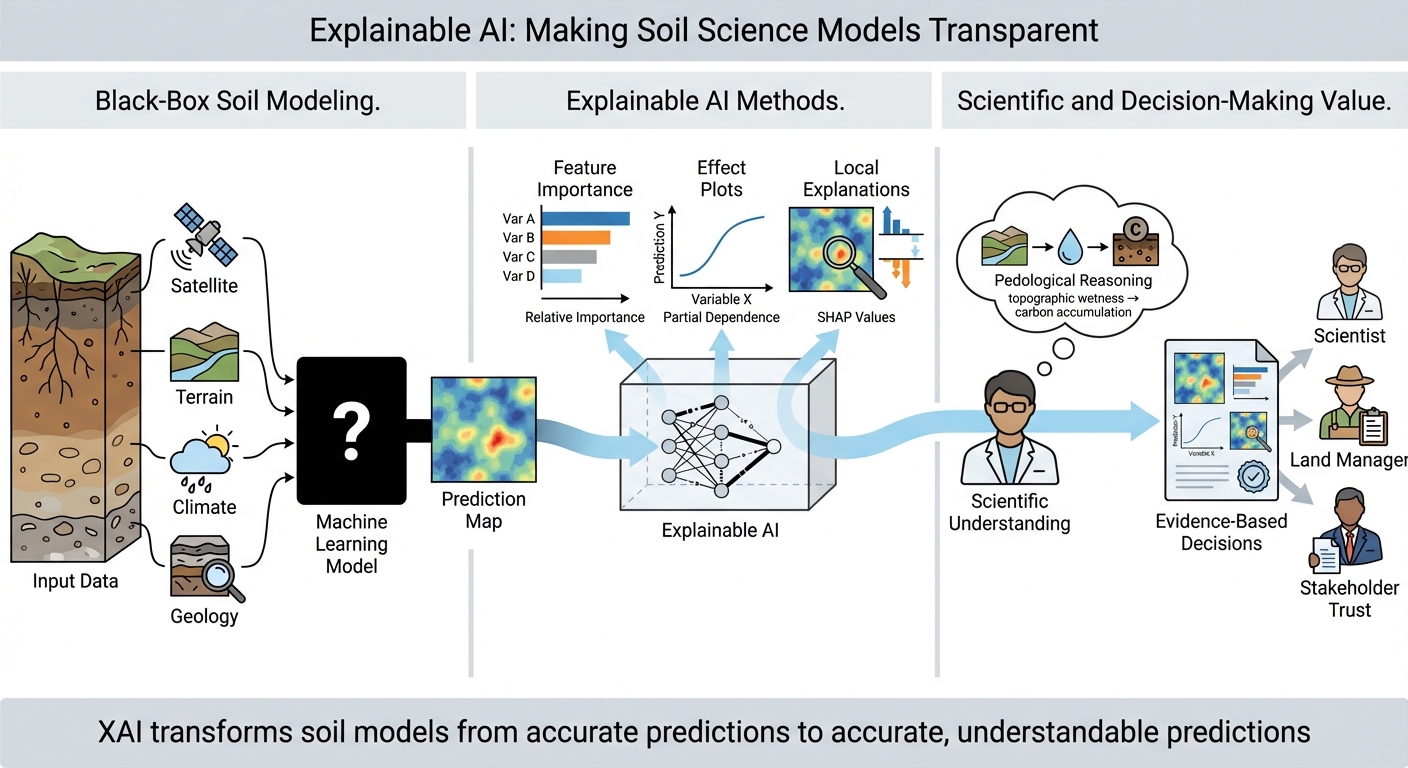

This project makes soil machine learning more transparent by adding interpretability and explainability to mapping workflows. We pair predictive models with global and local explanations that reveal key covariates, dominant gradients, and location-specific drivers behind predictions. Explanations are checked alongside spatially informed validation to reduce spurious conclusions from spatial autocorrelation or sampling bias. Outputs include soil maps plus clear driver summaries to support trust, scientific interpretation, and decision-making.
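As a rough illustration of what "global and local explanations" can mean in practice, the sketch below computes a permutation-based global importance and a simple mean-replacement local effect for a toy model. It is not the project's actual pipeline: `toy_model`, the covariate names (`elevation`, `wetness`, `noise`), and the synthetic data are all assumptions made for this example, and the local measure is a plain baseline-replacement difference rather than SHAP or any specific published method.

```python
import random


def rmse(y_true, y_pred):
    """Root mean squared error between two equal-length sequences."""
    return (sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)) ** 0.5


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Global importance: mean increase in RMSE when one covariate is shuffled."""
    rng = random.Random(seed)
    baseline = rmse(y, [predict(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            permuted = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(rmse(y, [predict(r) for r in permuted]) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


def local_effects(predict, X, row):
    """Local effect at one location: change in the prediction when a single
    covariate is replaced by its dataset mean (a simple baseline comparison)."""
    means = [sum(r[j] for r in X) / len(X) for j in range(len(row))]
    base = predict(row)
    return [base - predict(row[:j] + [means[j]] + row[j + 1:]) for j in range(len(row))]


def toy_model(row):
    """Hypothetical stand-in for a fitted soil model; it ignores the third covariate."""
    elevation, wetness, noise = row
    return 2.0 * elevation + 0.5 * wetness


# Synthetic data for illustration only; these are not real soil observations.
rng = random.Random(42)
X = [[rng.random(), rng.random(), rng.random()] for _ in range(200)]
y = [toy_model(row) + rng.gauss(0.0, 0.05) for row in X]

global_importance = permutation_importance(toy_model, X, y)
site_effects = local_effects(toy_model, X, X[0])

print("global importance:", [round(v, 3) for v in global_importance])
print("local effects at first site:", [round(v, 3) for v in site_effects])
```

In this toy setup the ignored covariate gets zero importance by construction, which is the kind of sanity check a driver summary should pass before it is interpreted.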

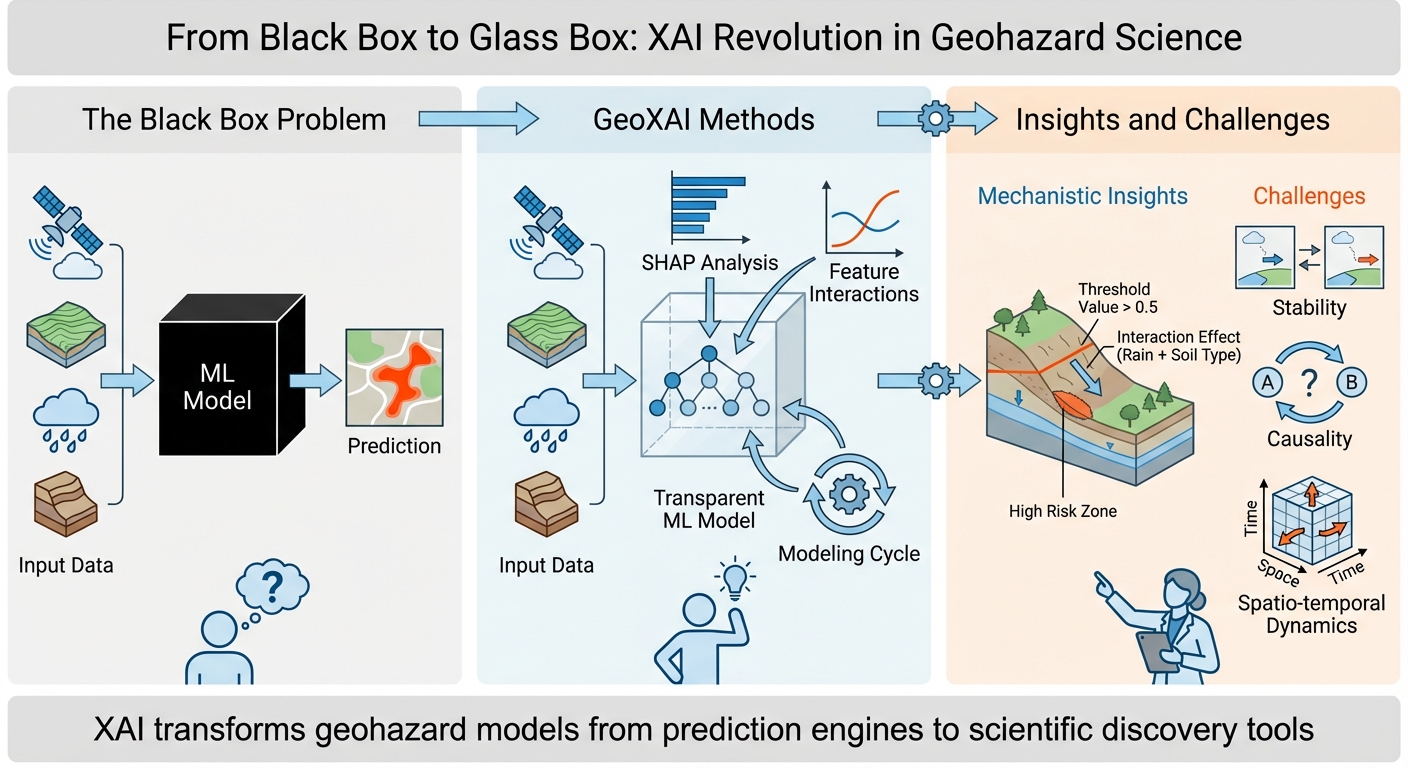

Main focus

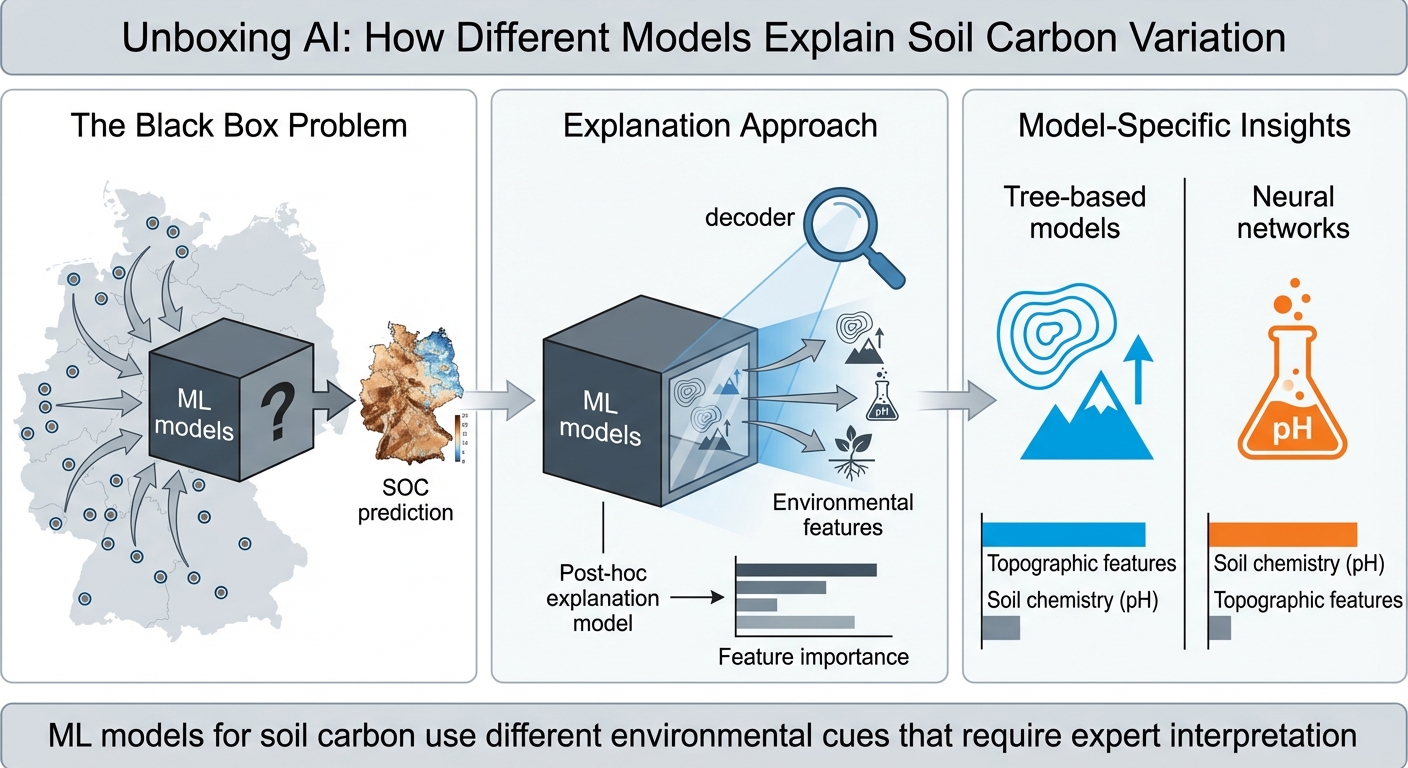

- Turn “black-box” soil models into explainable, trusted workflows.

- Connect model behavior to soil–landscape processes and mechanisms.

- Communicate reliability and avoid overinterpretation in areas with weak data support.

Objectives

- Generate global and local explanations of soil predictions and drivers.

- Evaluate explanation stability under spatial validation and transfer settings.

- Deliver maps and driver summaries that improve interpretation and usability.
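One possible way to approach the stability objective above is to group samples into spatial blocks, compute covariate importances once per held-out block, and then compare the resulting rankings. The sketch below covers only the blocking and the ranking comparison. The function names, the block size, the coordinates, and the per-fold importance values are made up for illustration, and mean pairwise Spearman correlation is just one of several reasonable stability measures.

```python
import math
from itertools import combinations


def spatial_block_ids(coords, block_size):
    """Map (x, y) coordinates to grid-cell ids so nearby samples share a fold."""
    return [(math.floor(x / block_size), math.floor(y / block_size)) for x, y in coords]


def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(a, b):
    """Spearman rank correlation (Pearson correlation of the ranks).
    Assumes neither input is constant, otherwise the denominator is zero."""
    ra, rb = average_ranks(a), average_ranks(b)
    ma, mb = sum(ra) / len(ra), sum(rb) / len(rb)
    cov = sum((x - ma) * (y - mb) for x, y in zip(ra, rb))
    sa = math.sqrt(sum((x - ma) ** 2 for x in ra))
    sb = math.sqrt(sum((y - mb) ** 2 for y in rb))
    return cov / (sa * sb)


def ranking_stability(importances_by_fold):
    """Mean pairwise Spearman correlation between per-fold importance vectors."""
    pairs = list(combinations(importances_by_fold, 2))
    return sum(spearman(a, b) for a, b in pairs) / len(pairs)


# Illustrative coordinates (arbitrary map units) and a hypothetical 50-unit block size.
coords = [(12.0, 3.0), (14.0, 8.0), (55.0, 7.0), (58.0, 61.0)]
blocks = spatial_block_ids(coords, block_size=50.0)

# Made-up importance vectors, one per held-out spatial block (not real results).
importances_by_fold = [
    [0.8, 0.3, 0.1],
    [0.7, 0.4, 0.05],
    [0.2, 0.6, 0.1],
]
stability = ranking_stability(importances_by_fold)
print(blocks, round(stability, 3))
```

A stability value near 1 suggests the driver ranking does not depend much on which region was held out; lower values flag explanations that may not transfer.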

Graphical abstract


::: caption
Graphical abstract summarizing the workflow and key results.
:::

Papers (selected)

Paper 1 — Explainable AI for Interpreting SOC Prediction

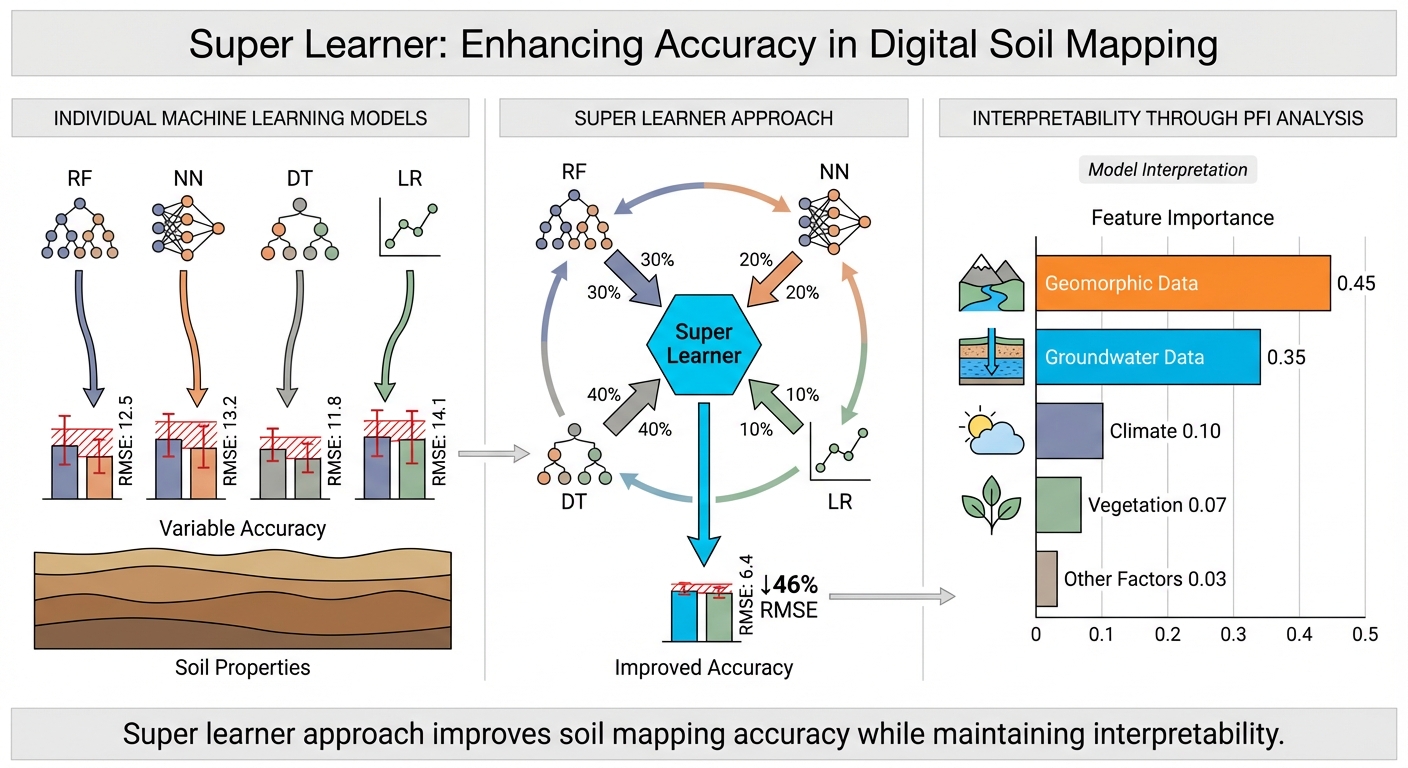

Paper 2 — Super Learner Boosts ML Accuracy in DSM

Paper 3 — Explainable AI in Geohazards: A Systematic Review