Extrapolation

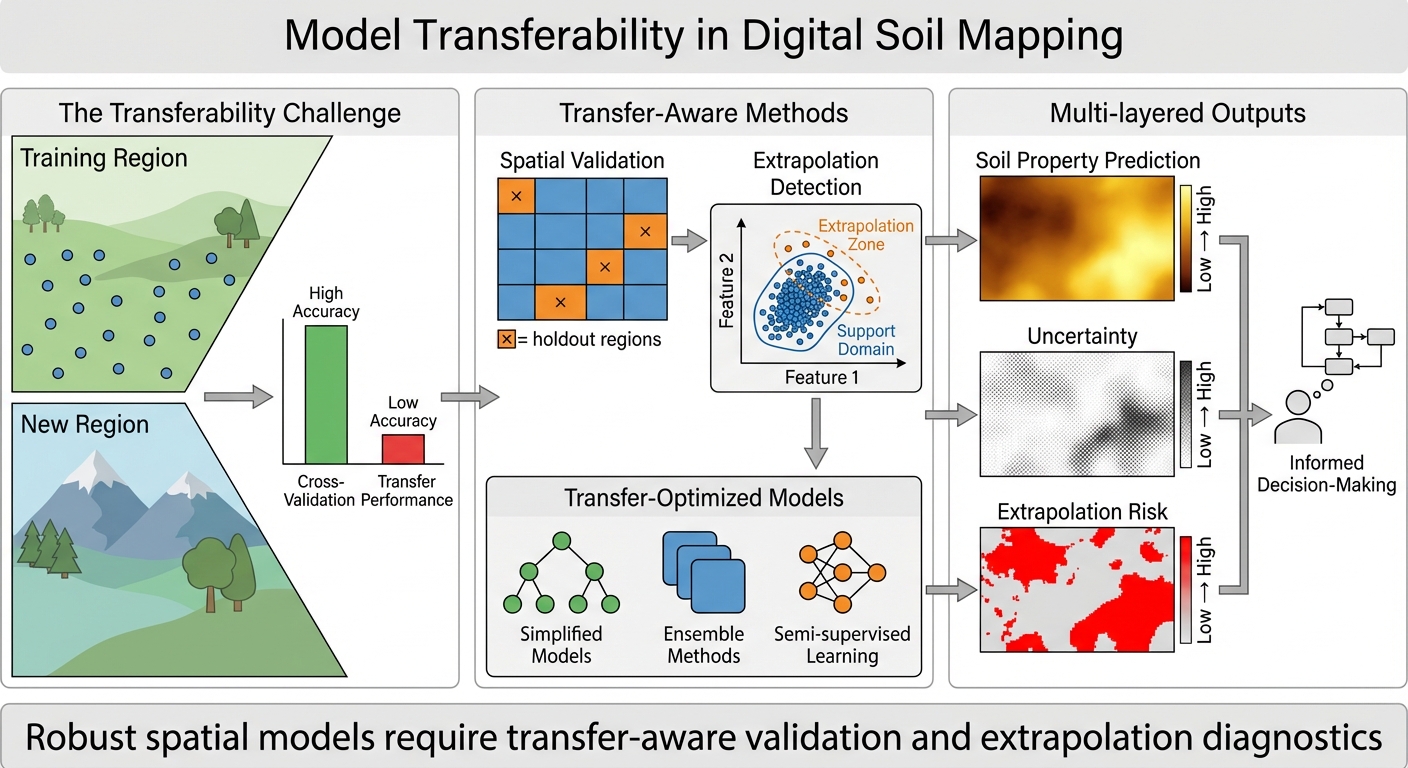

Tests model generalization across regions and scales, mapping where predictions extrapolate and where uncertainty increases.

This project examines whether spatial prediction models remain reliable when applied beyond their training conditions: in new regions, at different scales, and under covariate combinations not seen during training. We use spatially robust validation designs (blocked cross-validation, region holdouts) and diagnose where models extrapolate outside the training “applicability domain.” Outputs include prediction maps plus extrapolation-risk or similarity layers that show where predictions are well supported by the training data and where they should be treated as more uncertain. The aim is more trustworthy soil mapping under real deployment scenarios.
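The two validation designs named above can be illustrated with a minimal sketch. It is not the project's own code: the function names (`spatial_block_folds`, `region_holdout`), the square-block scheme, the block size, and the synthetic coordinates are all assumptions chosen for illustration. The idea is that samples sharing a spatial block, or a region, never appear on both sides of a train/test split.

```python
import numpy as np

def spatial_block_folds(x, y, block_size, n_folds, seed=0):
    """Assign samples to CV folds by square spatial block (hypothetical helper)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # Grid-cell index of each sample along each axis.
    col = np.floor((x - x.min()) / block_size).astype(int)
    row = np.floor((y - y.min()) / block_size).astype(int)
    # One integer label per occupied block; block_index maps samples to blocks.
    block_ids = row * (col.max() + 1) + col
    unique_blocks, block_index = np.unique(block_ids, return_inverse=True)
    # Shuffle the blocks, then deal them to folds round-robin.
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(unique_blocks))
    fold_of_block = np.empty(len(unique_blocks), dtype=int)
    fold_of_block[order] = np.arange(len(unique_blocks)) % n_folds
    return fold_of_block[block_index], block_index

def region_holdout(regions, held_out):
    """Return (train_mask, test_mask) with one whole region held out."""
    regions = np.asarray(regions)
    test = regions == held_out
    return ~test, test

# Synthetic sample locations (assumed units: km) for demonstration only.
rng = np.random.default_rng(42)
x = rng.uniform(0, 100, 200)
y = rng.uniform(0, 100, 200)

folds, blocks = spatial_block_folds(x, y, block_size=25.0, n_folds=4)
for k in range(4):
    test = folds == k
    print(f"fold {k}: {test.sum()} test samples, {(~test).sum()} train samples")

# Region holdout: train on the western half, test on the eastern half.
regions = np.where(x < 50, "west", "east")
train_mask, test_mask = region_holdout(regions, "east")
```

Compared with random K-fold, both designs keep nearby (spatially autocorrelated) samples together, so the test error is closer to what a model would show when transferred to unsampled areas. The block size is a tuning choice; a common heuristic is to set it at or above the autocorrelation range of the target variable.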

Main focus

- Improve generalization of soil models across space, scale, and data shifts.

- Detect and communicate extrapolation risk and applicability domain limits.

- Support safer use of maps in unsampled or novel environments.

Objectives

- Test model transfer with spatial validation and region-based holdouts.

- Map covariate coverage and identify out-of-distribution prediction zones.

- Deliver prediction maps with accompanying extrapolation-risk layers to guide interpretation and sampling.
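As a rough illustration of the covariate-coverage objective, the sketch below flags prediction cells that fall outside the training data in covariate space. It combines two simple, generic checks: a per-covariate range (envelope) test and a Mahalanobis distance scaled by a training-data quantile. This is an assumed stand-in, not necessarily the method used in the project; the function names, the 0.95 quantile, and the synthetic covariates are all hypothetical.

```python
import numpy as np

def mahalanobis(X, mean, inv_cov):
    """Mahalanobis distance of each row of X from `mean`."""
    diff = X - mean
    sq = np.einsum("ij,jk,ik->i", diff, inv_cov, diff)
    return np.sqrt(np.maximum(sq, 0.0))  # guard against tiny negative values

def fit_coverage(train_cov, quantile=0.95):
    """Summarize training covariate coverage (hypothetical helper)."""
    train_cov = np.asarray(train_cov, dtype=float)
    mean = train_cov.mean(axis=0)
    cov = np.atleast_2d(np.cov(train_cov, rowvar=False))
    inv_cov = np.linalg.pinv(cov)  # pseudo-inverse tolerates collinear covariates
    d_train = mahalanobis(train_cov, mean, inv_cov)
    return {
        "min": train_cov.min(axis=0),
        "max": train_cov.max(axis=0),
        "mean": mean,
        "inv_cov": inv_cov,
        # Assumed cutoff: distance exceeded by only 5% of training samples.
        "threshold": np.quantile(d_train, quantile),
    }

def extrapolation_layers(new_cov, model):
    """Return (outside_range, risk) for each prediction cell."""
    new_cov = np.asarray(new_cov, dtype=float)
    # True where any covariate lies outside the training min/max envelope.
    outside_range = np.any(
        (new_cov < model["min"]) | (new_cov > model["max"]), axis=1
    )
    # Risk > 1 means the cell is farther from the training centroid
    # than the chosen quantile of the training samples themselves.
    dist = mahalanobis(new_cov, model["mean"], model["inv_cov"])
    risk = dist / model["threshold"]
    return outside_range, risk

# Synthetic training covariates (two standardized predictors, illustration only).
rng = np.random.default_rng(0)
train = rng.normal(size=(300, 2))
model = fit_coverage(train)

# Two prediction cells: one at the training centroid, one far outside.
new_cells = np.vstack([train.mean(axis=0), [10.0, 10.0]])
outside_range, risk = extrapolation_layers(new_cells, model)
print(outside_range, risk)
```

Reshaping `risk` back to the raster grid gives a layer that can be displayed next to the prediction map. The envelope test is easy to explain but misses novel combinations of individually in-range covariates, which is the gap the multivariate distance is meant to cover.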

Graphical abstract

:::: {.row .justify-content-sm-center}
::: {.col-sm-10 .mt-3 .mt-md-0}
:::
::::

::: caption
Graphical abstract summarizing the workflow and key results.
:::

Papers (selected)

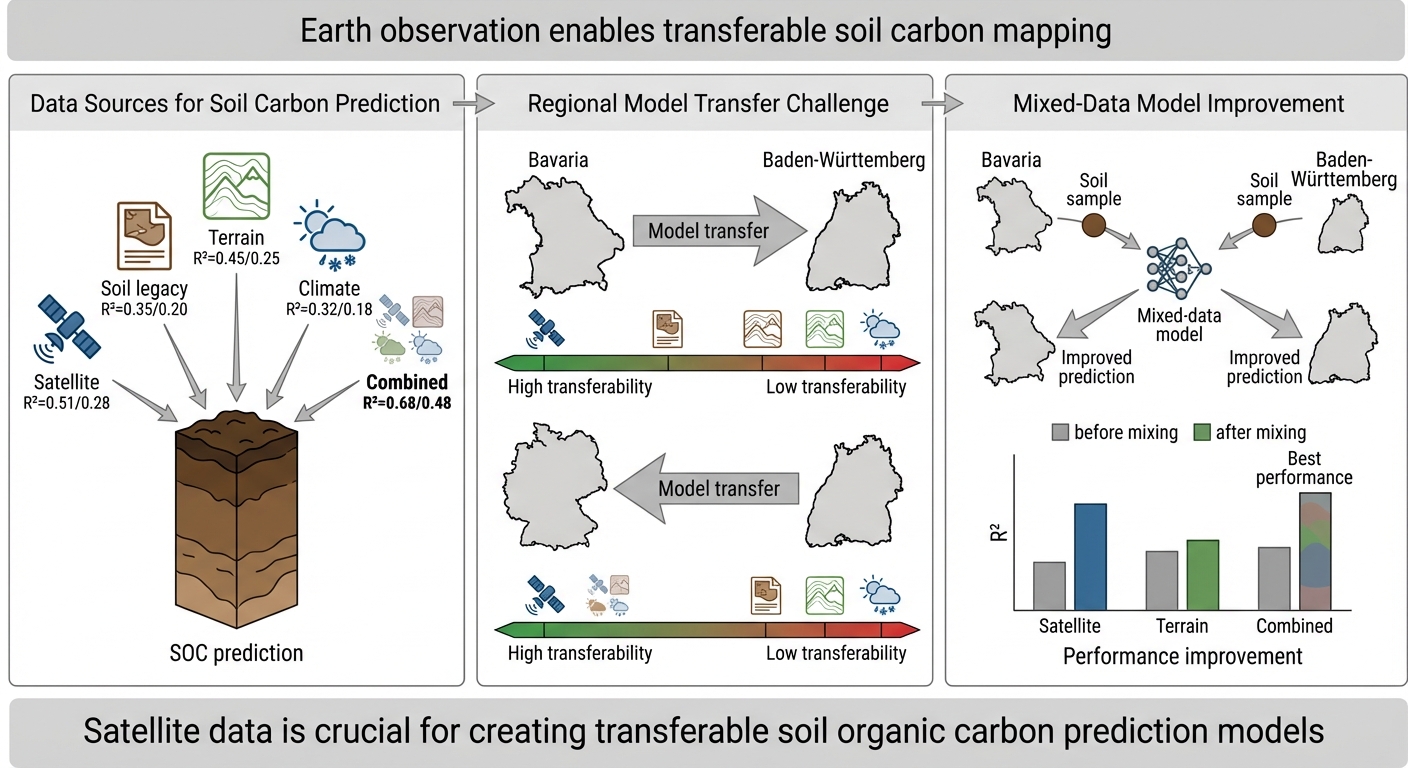

Paper 1 — Covariate Transferability for Cropland SOC Prediction

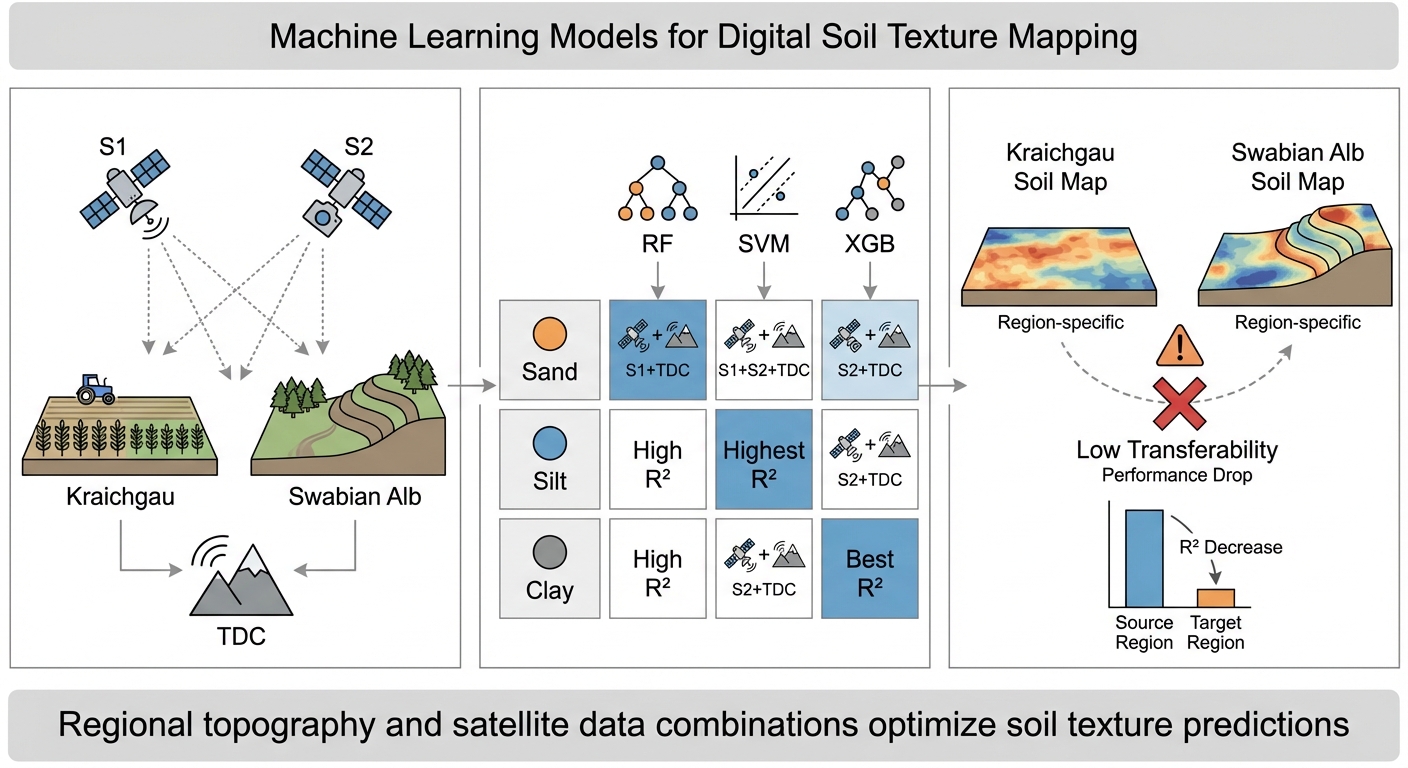

Paper 2 — Model Transferability Using Sentinel and Terrain Covariates

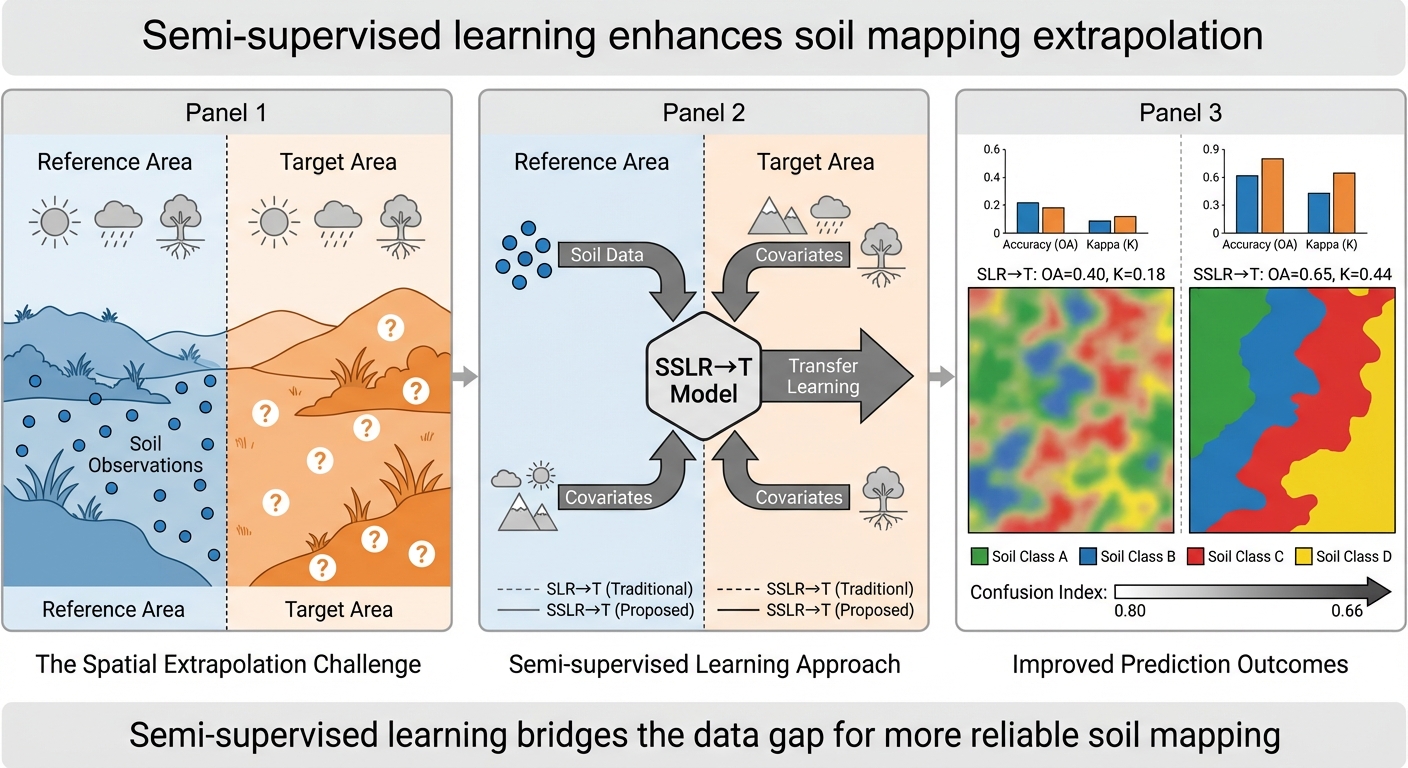

Paper 3 — Semi-Supervised Learning for Spatial Extrapolation